Compare commits

668 Commits

quick-expo

...

v4.5.2-bet

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

8aa185135a | ||

|

|

9ee60d1d49 | ||

|

|

3e4e8ed350 | ||

|

|

7f750077dd | ||

|

|

5752eaa2b4 | ||

|

|

337bccb488 | ||

|

|

2022b4e5ea | ||

|

|

410d523f8a | ||

|

|

6e50979045 | ||

|

|

22054cad12 | ||

|

|

3f06e7fda3 | ||

|

|

09f98c2442 | ||

|

|

cca0a6900e | ||

|

|

1a8303ca76 | ||

|

|

9690ddb32b | ||

|

|

a18549cf5b | ||

|

|

3c30dd59e4 | ||

|

|

91c4757cf8 | ||

|

|

53687f2235 | ||

|

|

71b89431ae | ||

|

|

0795eab05b | ||

|

|

b8ac4a5a06 | ||

|

|

75e93ec11e | ||

|

|

3a810e5bb5 | ||

|

|

e2376f553a | ||

|

|

916eb697de | ||

|

|

2cff55b12e | ||

|

|

00f1204bf0 | ||

|

|

28db13c995 | ||

|

|

5970abc85e | ||

|

|

283eeb7de5 | ||

|

|

d52e7a3b9f | ||

|

|

1b551a8665 | ||

|

|

5843ef458d | ||

|

|

c9c962abce | ||

|

|

397fbb9832 | ||

|

|

ebaa4fe4a6 | ||

|

|

c0dc179140 | ||

|

|

c4aa63eab5 | ||

|

|

e61a672209 | ||

|

|

60faaf40d5 | ||

|

|

bcda12db90 | ||

|

|

232b52d939 | ||

|

|

b5f66309ae | ||

|

|

f4bfe234a1 | ||

|

|

008a9b4d6d | ||

|

|

b9517b8490 | ||

|

|

cb8d35c5c3 | ||

|

|

9250f2baaf | ||

|

|

1e2ca1e297 | ||

|

|

61aa257992 | ||

|

|

952d1d6343 | ||

|

|

1eb7a3271e | ||

|

|

bd0dcc979d | ||

|

|

13531c2f3a | ||

|

|

6765de0c38 | ||

|

|

1c5fce1be1 | ||

|

|

866b8fdc25 | ||

|

|

fb9069efe8 | ||

|

|

1494fe3078 | ||

|

|

d52c69d746 | ||

|

|

f6f0108e17 | ||

|

|

7ba8ef01c5 | ||

|

|

579d72f03a | ||

|

|

020382a153 | ||

|

|

dae7e38179 | ||

|

|

69b1cdc964 | ||

|

|

9f3f4bdbd4 | ||

|

|

250d52131c | ||

|

|

17dbc6cc67 | ||

|

|

d7ad9be560 | ||

|

|

0b81ea8f4e | ||

|

|

064a5f5564 | ||

|

|

0d7accc990 | ||

|

|

10e2d3c632 | ||

|

|

13c32d4063 | ||

|

|

df4c8d2698 | ||

|

|

3f0e6591fb | ||

|

|

cd48a1dfc5 | ||

|

|

168866743b | ||

|

|

40ad9805b6 | ||

|

|

8a6275250e | ||

|

|

5e08421c98 | ||

|

|

ed616130b8 | ||

|

|

c2e652adfc | ||

|

|

5dc5c34af3 | ||

|

|

88469e7366 | ||

|

|

15de3600c3 | ||

|

|

379166c66c | ||

|

|

e07e35c104 | ||

|

|

c0779f1260 | ||

|

|

1e59182fda | ||

|

|

fd54c176eb | ||

|

|

4a495377fd | ||

|

|

66cac0665d | ||

|

|

92d160b077 | ||

|

|

da536adca3 | ||

|

|

8b06d3be15 | ||

|

|

2ade7e9a68 | ||

|

|

8d3c8184ce | ||

|

|

7126eec4f0 | ||

|

|

0ff59e626e | ||

|

|

473f1b0e54 | ||

|

|

e5993136ab | ||

|

|

b323f9c322 | ||

|

|

1fcd13e9ff | ||

|

|

11f6b82b72 | ||

|

|

94553504a7 | ||

|

|

1ea9c23576 | ||

|

|

991b2fd3c1 | ||

|

|

c22a6b48f1 | ||

|

|

22295ceef2 | ||

|

|

576987ad8c | ||

|

|

72c121a5c1 | ||

|

|

8358026a2f | ||

|

|

266d7d76de | ||

|

|

3ffe2090e5 | ||

|

|

9d54a82330 | ||

|

|

cc02540c5f | ||

|

|

ffc67572fa | ||

|

|

9a946c1eca | ||

|

|

c47401fb2c | ||

|

|

b941b4d621 | ||

|

|

a409c14b30 | ||

|

|

07685280e4 | ||

|

|

7a7d48bd0e | ||

|

|

6a7a56886c | ||

|

|

a686e21c07 | ||

|

|

de692a3434 | ||

|

|

e5404d2f29 | ||

|

|

0252091158 | ||

|

|

ed3666ca05 | ||

|

|

4a54dc5b61 | ||

|

|

9c21452d0b | ||

|

|

fac5cdac75 | ||

|

|

1f00d06588 | ||

|

|

568e60c52e | ||

|

|

8e75884556 | ||

|

|

ab4aef6c7e | ||

|

|

0e95082be6 | ||

|

|

185cfab5d8 | ||

|

|

6dcbb5e308 | ||

|

|

24071ebde7 | ||

|

|

2ff9e8c452 | ||

|

|

318b137490 | ||

|

|

2ace0bdb34 | ||

|

|

05ea435820 | ||

|

|

f9c54cdce2 | ||

|

|

148af24b2c | ||

|

|

f0e0fb8f64 | ||

|

|

a108fb749a | ||

|

|

6a0513f1ff | ||

|

|

ab3648c5ca | ||

|

|

3d841ef8fe | ||

|

|

588b8b23f9 | ||

|

|

e636987f31 | ||

|

|

0a7c56dace | ||

|

|

1fdf942715 | ||

|

|

bef6efdbdb | ||

|

|

1627cca29e | ||

|

|

2f88e38f97 | ||

|

|

3719150444 | ||

|

|

4f5856bcec | ||

|

|

72ad14c154 | ||

|

|

3c8cbab90c | ||

|

|

ccb25c9ff0 | ||

|

|

5e5a26ed4d | ||

|

|

13f277a1ea | ||

|

|

f016ece2af | ||

|

|

42d7166d2b | ||

|

|

6930a2543c | ||

|

|

c1e7314df1 | ||

|

|

ec94b99f4b | ||

|

|

2309f99dad | ||

|

|

44e21844ab | ||

|

|

f59ac66e78 | ||

|

|

f1e35689bb | ||

|

|

cd2aa8adab | ||

|

|

cd503efaac | ||

|

|

765ec3ed00 | ||

|

|

3e11adb40d | ||

|

|

13aae388e8 | ||

|

|

0d00df1226 | ||

|

|

50a965c913 | ||

|

|

1eba71fa8c | ||

|

|

700571c913 | ||

|

|

e8b32ca30e | ||

|

|

75d3160fdf | ||

|

|

4dae581438 | ||

|

|

1a0d5457ce | ||

|

|

7ad845d5c6 | ||

|

|

2679062b95 | ||

|

|

140b01946c | ||

|

|

77ca6aedb3 | ||

|

|

a0be0d59e3 | ||

|

|

3d4ef1da4a | ||

|

|

fd531cfd1f | ||

|

|

60333cbbd7 | ||

|

|

1cf4dc0013 | ||

|

|

7d5f7791db | ||

|

|

092ed25032 | ||

|

|

938019e90e | ||

|

|

8f4e9f9253 | ||

|

|

ad2c293f6f | ||

|

|

83544170f3 | ||

|

|

bd92e86216 | ||

|

|

014274f4c6 | ||

|

|

4958c49147 | ||

|

|

82ae588d9a | ||

|

|

5cc78c4e50 | ||

|

|

a96851b38f | ||

|

|

bcf95d3872 | ||

|

|

43cdf9fab1 | ||

|

|

177f6eaed5 | ||

|

|

826410e5ea | ||

|

|

fca00ed248 | ||

|

|

3108fb60f9 | ||

|

|

6bd48ca29f | ||

|

|

c113266095 | ||

|

|

7ad2066aa6 | ||

|

|

9e326b7893 | ||

|

|

0c4c30f75c | ||

|

|

83a40db2da | ||

|

|

b448de190d | ||

|

|

04901b438d | ||

|

|

f690446cee | ||

|

|

46ab02f914 | ||

|

|

7c8fe2a788 | ||

|

|

6f35bd5577 | ||

|

|

aa6a5028bb | ||

|

|

45d4569d97 | ||

|

|

e51d420fa9 | ||

|

|

755cb5d6f3 | ||

|

|

b4e777018e | ||

|

|

de7275c38b | ||

|

|

50dbb9d1bd | ||

|

|

5ca54220b5 | ||

|

|

a4e967f37e | ||

|

|

454827baaa | ||

|

|

c39f11e2da | ||

|

|

86195b56ed | ||

|

|

e0d4c7844e | ||

|

|

bd12cd5c07 | ||

|

|

7575b59f4f | ||

|

|

5180e7ad27 | ||

|

|

8a13d88c3e | ||

|

|

2649a0174d | ||

|

|

1da8c4ca2c | ||

|

|

ea42b2bce1 | ||

|

|

94b1a25252 | ||

|

|

7522f87066 | ||

|

|

4751e4930e | ||

|

|

c31dd3ced9 | ||

|

|

536897a84c | ||

|

|

368993597c | ||

|

|

42a1bfeeed | ||

|

|

79deab948b | ||

|

|

b6cf73e292 | ||

|

|

1c69c0bff7 | ||

|

|

fce3e3626a | ||

|

|

26b8ca79d4 | ||

|

|

7edb397741 | ||

|

|

c546987fbf | ||

|

|

476946249b | ||

|

|

9c1a6e220a | ||

|

|

fa648ca675 | ||

|

|

3297253906 | ||

|

|

40d53275e3 | ||

|

|

d4f4211ee4 | ||

|

|

91c30b7ad8 | ||

|

|

1b6dada408 | ||

|

|

a84c2bdd12 | ||

|

|

3445495f38 | ||

|

|

6304bf5564 | ||

|

|

d0705ec83e | ||

|

|

8fd2c78b6a | ||

|

|

9dcc235f5a | ||

|

|

cec0130cba | ||

|

|

a9216eda89 | ||

|

|

5de231dc1c | ||

|

|

4f9566e630 | ||

|

|

aeea29f61d | ||

|

|

b9eb0da64e | ||

|

|

b416dcc844 | ||

|

|

3dc9a05706 | ||

|

|

49081f0066 | ||

|

|

4426898e90 | ||

|

|

c3587ff4cc | ||

|

|

4914c815c0 | ||

|

|

26cc5d6d1e | ||

|

|

1351efe992 | ||

|

|

51c490218a | ||

|

|

6a606bc733 | ||

|

|

a5bc66eb27 | ||

|

|

bb56c01b55 | ||

|

|

13030defc1 | ||

|

|

4d7339d085 | ||

|

|

94ace9ff1c | ||

|

|

38db55d5c0 | ||

|

|

30e3295d3e | ||

|

|

83f25937da | ||

|

|

96809e226d | ||

|

|

731a0b5d64 | ||

|

|

4f01bc5bcb | ||

|

|

f010e7c934 | ||

|

|

6f7452ab6d | ||

|

|

4f8844d989 | ||

|

|

85b16dc56d | ||

|

|

0084cbcb63 | ||

|

|

c1a9641ce5 | ||

|

|

fb01b4b3f7 | ||

|

|

780c1321e6 | ||

|

|

3597bec7c4 | ||

|

|

37f0dc8b32 | ||

|

|

94b6ec3a56 | ||

|

|

f8fcf266f6 | ||

|

|

bb67925f6f | ||

|

|

e091d473a4 | ||

|

|

476eda2fc5 | ||

|

|

5d5810b9eb | ||

|

|

0301fe5f37 | ||

|

|

9512d04438 | ||

|

|

475bc39819 | ||

|

|

eb16658f4c | ||

|

|

39ee26e3f1 | ||

|

|

74730b6d60 | ||

|

|

f68744fba7 | ||

|

|

1d90cd25c4 | ||

|

|

ebafc41c32 | ||

|

|

63bebc67a2 | ||

|

|

029e451918 | ||

|

|

bb9eacf38e | ||

|

|

4260497900 | ||

|

|

c042a64b04 | ||

|

|

f1190400a5 | ||

|

|

240af66809 | ||

|

|

d792744bfb | ||

|

|

54b476fc27 | ||

|

|

ea1208d0ad | ||

|

|

52e8831bcc | ||

|

|

1f821fc654 | ||

|

|

ec5b887e78 | ||

|

|

bde2cdb09f | ||

|

|

7fe5c354f1 | ||

|

|

45501c82eb | ||

|

|

d3c7a04d9d | ||

|

|

d2299ab926 | ||

|

|

b9da035a97 | ||

|

|

4faaaa1eb7 | ||

|

|

0fd4084fac | ||

|

|

423bd3f223 | ||

|

|

fc984da9d3 | ||

|

|

e0aeae5220 | ||

|

|

6baeb58500 | ||

|

|

d8cd3a78e4 | ||

|

|

241c7746c2 | ||

|

|

25fe1df43b | ||

|

|

6bcc83d920 | ||

|

|

aaf6ad8ab0 | ||

|

|

acbc2ea268 | ||

|

|

e3ef332881 | ||

|

|

dcfc695678 | ||

|

|

f60673fe36 | ||

|

|

42c0e1d8cb | ||

|

|

fa70d7d1bd | ||

|

|

73b338d38a | ||

|

|

1765ab4118 | ||

|

|

a462a56a9d | ||

|

|

337ad2968f | ||

|

|

fad37e9634 | ||

|

|

6269171f5d | ||

|

|

17286e0c3e | ||

|

|

336edfc93f | ||

|

|

5484dc8ace | ||

|

|

36d3fa12f9 | ||

|

|

342119c953 | ||

|

|

3ff7c7164a | ||

|

|

33e223eea1 | ||

|

|

7d5fceb6ff | ||

|

|

482ecd7c38 | ||

|

|

55fb7ba8bb | ||

|

|

81b1cda8c5 | ||

|

|

ea84ec3439 | ||

|

|

b474bd109b | ||

|

|

819fdac8ab | ||

|

|

e203d34aed | ||

|

|

4c62f22f6f | ||

|

|

a9b201e1cb | ||

|

|

84832472a2 | ||

|

|

2015b330be | ||

|

|

902267f5eb | ||

|

|

bf315c53ac | ||

|

|

96afe1b0d6 | ||

|

|

c571582ed8 | ||

|

|

50a22b63d0 | ||

|

|

79f7d1d4bc | ||

|

|

04902c2d9f | ||

|

|

a25af4714e | ||

|

|

c8e46f774e | ||

|

|

0034ce2378 | ||

|

|

4f4e3cbcc3 | ||

|

|

7eff7c649c | ||

|

|

b8f08127f0 | ||

|

|

5bb9f181d8 | ||

|

|

2231bc21cd | ||

|

|

ee1c51e9f8 | ||

|

|

5ed441aada | ||

|

|

4c8a814ba5 | ||

|

|

2e196178ab | ||

|

|

8f54a6b03d | ||

|

|

30cbcd5ce0 | ||

|

|

223dd0b22f | ||

|

|

be1c1075b5 | ||

|

|

cb64a43a78 | ||

|

|

01681b9d87 | ||

|

|

fa2bb52007 | ||

|

|

aeafa81cb2 | ||

|

|

0eabd81909 | ||

|

|

cd3a3033d7 | ||

|

|

a3a9d00267 | ||

|

|

d0795502e6 | ||

|

|

1908cb1210 | ||

|

|

0f1cc85423 | ||

|

|

1bfa004e65 | ||

|

|

ff6d56cf6a | ||

|

|

901130f3bd | ||

|

|

78f770de7d | ||

|

|

e3aa4d54ef | ||

|

|

c50a4cdeff | ||

|

|

16fb7a926d | ||

|

|

77878e4ad1 | ||

|

|

4ee66a55c6 | ||

|

|

6a41c780f4 | ||

|

|

3b4a3b9ef7 | ||

|

|

f3625aaf71 | ||

|

|

beef215394 | ||

|

|

b551db8774 | ||

|

|

1546893cb6 | ||

|

|

20ff374438 | ||

|

|

111702881a | ||

|

|

17efc3d32c | ||

|

|

c93594d8ca | ||

|

|

57fdaf5073 | ||

|

|

b332cff588 | ||

|

|

1a06cd2d22 | ||

|

|

cdfbd73cc5 | ||

|

|

58666fd4ec | ||

|

|

b5f22516b6 | ||

|

|

d953d1b342 | ||

|

|

0974c76fc6 | ||

|

|

e653b793d8 | ||

|

|

425bed050b | ||

|

|

50f8f84967 | ||

|

|

37c7be1d06 | ||

|

|

c2feedac20 | ||

|

|

0be07ba093 | ||

|

|

00c98b10b9 | ||

|

|

d81826214f | ||

|

|

95b86f437e | ||

|

|

99876e3158 | ||

|

|

97215e31e9 | ||

|

|

a1bf7ddb50 | ||

|

|

5ae33a320c | ||

|

|

2ef81942db | ||

|

|

571be4b3f9 | ||

|

|

12c9e638f5 | ||

|

|

2df98c610a | ||

|

|

f59c6c9fe5 | ||

|

|

5b4e69fa0a | ||

|

|

aad39227b7 | ||

|

|

2c2b4fc08f | ||

|

|

8910a6bd07 | ||

|

|

3b15d315ec | ||

|

|

1f8021833b | ||

|

|

e2ce2f7057 | ||

|

|

17a3fec158 | ||

|

|

8ef8685e4e | ||

|

|

1c8e69225d | ||

|

|

e7634a2521 | ||

|

|

a03682fc8a | ||

|

|

d185fe8a8c | ||

|

|

7e82e83faa | ||

|

|

f85460cce8 | ||

|

|

34fce0ef58 | ||

|

|

7699cca6b9 | ||

|

|

da5b96c0a8 | ||

|

|

b503ea9a02 | ||

|

|

c6c04b87e1 | ||

|

|

065af0b4a8 | ||

|

|

f92153d957 | ||

|

|

1d31e1665c | ||

|

|

8155813ee4 | ||

|

|

d60f052897 | ||

|

|

f664771643 | ||

|

|

402ac726f3 | ||

|

|

08961cc850 | ||

|

|

8363a887bc | ||

|

|

3e1f947922 | ||

|

|

760f5c9616 | ||

|

|

ba74f98015 | ||

|

|

b055fff6e0 | ||

|

|

ed17b425a3 | ||

|

|

87fe62005d | ||

|

|

fce2f9a46a | ||

|

|

bb86a3b8cc | ||

|

|

77c5192798 | ||

|

|

e5b47baee3 | ||

|

|

81fbed7a7f | ||

|

|

90262a0318 | ||

|

|

72633a0c0d | ||

|

|

246c54f863 | ||

|

|

68fd49c224 | ||

|

|

d179f4c753 | ||

|

|

80624d3abe | ||

|

|

02aa94afc9 | ||

|

|

1b853dd3b3 | ||

|

|

e715a95cc0 | ||

|

|

3ca0810756 | ||

|

|

af4d354ff4 | ||

|

|

ca1c7cc556 | ||

|

|

cb2a0b2492 | ||

|

|

185699cb51 | ||

|

|

ce85f8f94d | ||

|

|

39748bdd6c | ||

|

|

dcaf8351b5 | ||

|

|

2fa48b1138 | ||

|

|

02ce6b0204 | ||

|

|

af75858ce8 | ||

|

|

3760217ff0 | ||

|

|

624ada2873 | ||

|

|

e7c64265ae | ||

|

|

f47c83fece | ||

|

|

82601dea24 | ||

|

|

da4f465ed3 | ||

|

|

a5f39da8ae | ||

|

|

016e96d0e6 | ||

|

|

37c305461f | ||

|

|

9f204bf187 | ||

|

|

1c1aedcefe | ||

|

|

268bdcd50c | ||

|

|

8f05f4acb3 | ||

|

|

aafa52db17 | ||

|

|

275c57631b | ||

|

|

61ec9e4365 | ||

|

|

d348ef21db | ||

|

|

b659136f64 | ||

|

|

e568adc825 | ||

|

|

eec1840e8a | ||

|

|

a0de1b1171 | ||

|

|

f6c90578a9 | ||

|

|

3776c00377 | ||

|

|

f572c05a32 | ||

|

|

3895c6bb47 | ||

|

|

2e03056a15 | ||

|

|

eaa5970a0f | ||

|

|

e79e19c614 | ||

|

|

0ef5ac04d8 | ||

|

|

2cb3a6b446 | ||

|

|

d75397d793 | ||

|

|

5b58ed9c26 | ||

|

|

f4c39bbf3c | ||

|

|

e2ce349a30 | ||

|

|

04a6540890 | ||

|

|

b3b7d021c5 | ||

|

|

b396c8f820 | ||

|

|

8cc8f9f0b9 | ||

|

|

3bbe06a55b | ||

|

|

dfe37496f2 | ||

|

|

3fe13f0443 | ||

|

|

a5b5f36298 | ||

|

|

b9e2e51bd7 | ||

|

|

10e63f3e77 | ||

|

|

820044b489 | ||

|

|

89c904abc1 | ||

|

|

c5a3ee01ee | ||

|

|

a5cc99005a | ||

|

|

77ebf0051c | ||

|

|

d5cee7b35b | ||

|

|

3ac96c4ae4 | ||

|

|

60545674c5 | ||

|

|

b5c313e517 | ||

|

|

e3bdad6d77 | ||

|

|

7453afa684 | ||

|

|

86eca6bc7e | ||

|

|

3c0bc69662 | ||

|

|

2baf9a1446 | ||

|

|

296038a3de | ||

|

|

71e1ea5736 | ||

|

|

32d05edb6a | ||

|

|

ba608ff438 | ||

|

|

5d79b687d5 | ||

|

|

ad1ec70e94 | ||

|

|

7e73f81d4e | ||

|

|

9a0ae06c87 | ||

|

|

902fbbf29a | ||

|

|

a0df43484a | ||

|

|

d2317ab908 | ||

|

|

fa733e2285 | ||

|

|

134737d4a8 | ||

|

|

def3141b6d | ||

|

|

057afe2bd5 | ||

|

|

40203f2823 | ||

|

|

9d711e45f9 | ||

|

|

35eb5716a5 | ||

|

|

7a10b85b4c | ||

|

|

7a2e86246d | ||

|

|

331b275105 | ||

|

|

3791fd568c | ||

|

|

6ed9bb0258 | ||

|

|

e66f2fcd2d | ||

|

|

1250f5d25a | ||

|

|

4a3ef70979 | ||

|

|

bf29ee430e | ||

|

|

c7f1e75d22 | ||

|

|

0886712714 | ||

|

|

f415c8bfe9 | ||

|

|

4c1ac0757c | ||

|

|

67a793038b | ||

|

|

05a65dab3c | ||

|

|

0d61e43431 | ||

|

|

b6195603e8 | ||

|

|

6db306cb0c | ||

|

|

48140348e0 | ||

|

|

989574bb52 | ||

|

|

8f3c479642 | ||

|

|

4db464772e | ||

|

|

039d3b4058 | ||

|

|

b99e3ed177 | ||

|

|

6519ba95bc | ||

|

|

6f22932b16 | ||

|

|

bf725dd563 | ||

|

|

dea6700a25 | ||

|

|

b8ccae570e | ||

|

|

17fc6ccc2e | ||

|

|

112f310d13 | ||

|

|

8874589ed0 | ||

|

|

f7621ae336 | ||

|

|

b4cc211763 | ||

|

|

38263de9f1 | ||

|

|

260e3c50df | ||

|

|

57327623d1 | ||

|

|

ff9f1d85ab | ||

|

|

661775d5af | ||

|

|

dd1088d02d | ||

|

|

8d265ad6d2 | ||

|

|

346c530f76 | ||

|

|

870e3ad666 | ||

|

|

e31ff5960c | ||

|

|

3fa71cc94a | ||

|

|

f5ea87da7b | ||

|

|

643695bd2b | ||

|

|

697a9438c6 | ||

|

|

3ad665f80b | ||

|

|

7847eaa64d | ||

|

|

bb870ec90f | ||

|

|

9959e61b35 | ||

|

|

92ead26873 | ||

|

|

afb27ec989 | ||

|

|

ff30b6511c | ||

|

|

56306aeaec | ||

|

|

c2c354044a | ||

|

|

ceb216df8c | ||

|

|

b2994ede8c | ||

|

|

0b87c5085c | ||

|

|

2305d086cc | ||

|

|

6c3c1b377e | ||

|

|

07c4c89720 | ||

|

|

87efb92f07 |

15

.github/workflows/build-app-beta.yaml

vendored

@@ -19,13 +19,13 @@ jobs:

|

||||

env:

|

||||

GITHUB_CONTEXT: ${{ toJson(github) }}

|

||||

run: echo "$GITHUB_CONTEXT"

|

||||

- uses: actions/checkout@v1

|

||||

- uses: actions/checkout@v2

|

||||

with:

|

||||

fetch-depth: 1

|

||||

- name: Use Node.js 10.x

|

||||

- name: Use Node.js 14.x

|

||||

uses: actions/setup-node@v1

|

||||

with:

|

||||

node-version: 10.x

|

||||

node-version: 14.x

|

||||

- name: yarn install

|

||||

run: |

|

||||

yarn install

|

||||

@@ -47,8 +47,8 @@ jobs:

|

||||

yarn run build:app

|

||||

env:

|

||||

GH_TOKEN: ${{ secrets.GH_TOKEN }} # token for electron publish

|

||||

WIN_CSC_LINK: ${{ secrets.WINCERT_CERTIFICATE }}

|

||||

WIN_CSC_KEY_PASSWORD: ${{ secrets.WINCERT_PASSWORD }}

|

||||

# WIN_CSC_LINK: ${{ secrets.WINCERT_CERTIFICATE }}

|

||||

# WIN_CSC_KEY_PASSWORD: ${{ secrets.WINCERT_PASSWORD }}

|

||||

|

||||

- name: Publish Mac

|

||||

if: matrix.os == 'macOS-10.15'

|

||||

@@ -78,8 +78,9 @@ jobs:

|

||||

cp app/dist/*x86*.AppImage artifacts/dbgate-beta.AppImage || true

|

||||

cp app/dist/*arm64*.AppImage artifacts/dbgate-beta-arm64.AppImage || true

|

||||

cp app/dist/*armv7l*.AppImage artifacts/dbgate-beta-armv7l.AppImage || true

|

||||

cp app/dist/*.exe artifacts/dbgate-beta.exe || true

|

||||

cp app/dist/*win*.zip artifacts/dbgate-windows-beta.zip || true

|

||||

cp app/dist/*win*.exe artifacts/dbgate-beta.exe || true

|

||||

cp app/dist/*win_x64.zip artifacts/dbgate-windows-beta.zip || true

|

||||

cp app/dist/*win_arm64.zip artifacts/dbgate-windows-beta-arm64.zip || true

|

||||

cp app/dist/*-mac.dmg artifacts/dbgate-beta.dmg || true

|

||||

cp app/dist/*-mac_arm64.dmg artifacts/dbgate-beta-arm64.dmg || true

|

||||

|

||||

|

||||

9

.github/workflows/build-app.yaml

vendored

@@ -23,13 +23,13 @@ jobs:

|

||||

env:

|

||||

GITHUB_CONTEXT: ${{ toJson(github) }}

|

||||

run: echo "$GITHUB_CONTEXT"

|

||||

- uses: actions/checkout@v1

|

||||

- uses: actions/checkout@v2

|

||||

with:

|

||||

fetch-depth: 1

|

||||

- name: Use Node.js 10.x

|

||||

- name: Use Node.js 14.x

|

||||

uses: actions/setup-node@v1

|

||||

with:

|

||||

node-version: 10.x

|

||||

node-version: 14.x

|

||||

- name: yarn install

|

||||

run: |

|

||||

yarn install

|

||||

@@ -79,7 +79,8 @@ jobs:

|

||||

cp app/dist/*arm64*.AppImage artifacts/dbgate-latest-arm64.AppImage || true

|

||||

cp app/dist/*armv7l*.AppImage artifacts/dbgate-latest-armv7l.AppImage || true

|

||||

cp app/dist/*.exe artifacts/dbgate-latest.exe || true

|

||||

cp app/dist/*win*.zip artifacts/dbgate-windows-latest.zip || true

|

||||

cp app/dist/*win_x64.zip artifacts/dbgate-windows-latest.zip || true

|

||||

cp app/dist/*win_arm64.zip artifacts/dbgate-windows-latest-arm64.zip || true

|

||||

cp app/dist/*-mac.dmg artifacts/dbgate-latest.dmg || true

|

||||

cp app/dist/*-mac_arm64.dmg artifacts/dbgate-latest-arm64.dmg || true

|

||||

|

||||

|

||||
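The artifact-copy lines in the two app workflows above replace the broad `*win*.zip` glob with separate `*win_x64.zip` and `*win_arm64.zip` globs, each followed by `|| true` so that a missing artifact does not fail the job. A minimal Python sketch of that behaviour follows. The helper name `copy_artifact`, the choice of copying the first sorted match, and the file names in the usage note are assumptions for illustration only and are not part of the workflows.

```python
import glob
import shutil
from pathlib import Path


def copy_artifact(pattern: str, dest: str) -> bool:
    """Copy the first file matching `pattern` to `dest`.

    Returns False and does nothing when no file matches, which mirrors
    the `|| true` suffix in the workflow: a missing artifact is skipped
    rather than treated as an error.
    """
    matches = sorted(glob.glob(pattern))
    if not matches:
        return False
    # Create the destination directory (e.g. artifacts/) if it is missing.
    Path(dest).parent.mkdir(parents=True, exist_ok=True)
    shutil.copyfile(matches[0], dest)
    return True
```

With hypothetical files `dbgate-4.5.1-win_x64.zip` and `dbgate-4.5.1-win_arm64.zip` in `app/dist`, the old `*win*.zip` glob matches both files, while each of the new globs matches exactly one, so every architecture can be copied to its own fixed name.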

6

.github/workflows/build-docker-beta.yaml

vendored

@@ -21,13 +21,13 @@ jobs:

|

||||

env:

|

||||

GITHUB_CONTEXT: ${{ toJson(github) }}

|

||||

run: echo "$GITHUB_CONTEXT"

|

||||

- uses: actions/checkout@v1

|

||||

- uses: actions/checkout@v2

|

||||

with:

|

||||

fetch-depth: 1

|

||||

- name: Use Node.js 10.x

|

||||

- name: Use Node.js 14.x

|

||||

uses: actions/setup-node@v1

|

||||

with:

|

||||

node-version: 10.x

|

||||

node-version: 14.x

|

||||

- name: yarn install

|

||||

run: |

|

||||

yarn install

|

||||

|

||||

6

.github/workflows/build-docker.yaml

vendored

@@ -27,13 +27,13 @@ jobs:

|

||||

env:

|

||||

GITHUB_CONTEXT: ${{ toJson(github) }}

|

||||

run: echo "$GITHUB_CONTEXT"

|

||||

- uses: actions/checkout@v1

|

||||

- uses: actions/checkout@v2

|

||||

with:

|

||||

fetch-depth: 1

|

||||

- name: Use Node.js 10.x

|

||||

- name: Use Node.js 14.x

|

||||

uses: actions/setup-node@v1

|

||||

with:

|

||||

node-version: 10.x

|

||||

node-version: 14.x

|

||||

- name: yarn install

|

||||

run: |

|

||||

yarn install

|

||||

|

||||

8

.github/workflows/build-npm.yaml

vendored

@@ -6,7 +6,7 @@ on:

|

||||

push:

|

||||

tags:

|

||||

- 'v[0-9]+.[0-9]+.[0-9]+'

|

||||

# - 'v[0-9]+.[0-9]+.[0-9]+-alpha.[0-9]+'

|

||||

- 'v[0-9]+.[0-9]+.[0-9]+-alpha.[0-9]+'

|

||||

|

||||

# on:

|

||||

# push:

|

||||

@@ -27,13 +27,13 @@ jobs:

|

||||

env:

|

||||

GITHUB_CONTEXT: ${{ toJson(github) }}

|

||||

run: echo "$GITHUB_CONTEXT"

|

||||

- uses: actions/checkout@v1

|

||||

- uses: actions/checkout@v2

|

||||

with:

|

||||

fetch-depth: 1

|

||||

- name: Use Node.js 10.x

|

||||

- name: Use Node.js 14.x

|

||||

uses: actions/setup-node@v1

|

||||

with:

|

||||

node-version: 10.x

|

||||

node-version: 14.x

|

||||

|

||||

- name: Configure NPM token

|

||||

env:

|

||||

|

||||
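The `tags:` entries in `build-npm.yaml` above are GitHub Actions filter patterns rather than regular expressions. The sketch below, assuming GitHub's documented semantics in which `.` is a literal dot and `+` repeats the preceding character or class, checks which tags the two enabled filters would accept. The helper names `filter_to_regex` and `triggers_npm_build` are hypothetical, and the translation covers only the constructs used in these two patterns (it does not handle `*`, `**`, `?` or `!`).

```python
import re

# The two tag filters enabled in build-npm.yaml after this change.
TAG_FILTERS = [
    "v[0-9]+.[0-9]+.[0-9]+",
    "v[0-9]+.[0-9]+.[0-9]+-alpha.[0-9]+",
]


def filter_to_regex(pattern: str) -> re.Pattern:
    """Translate the subset of filter syntax used above into a regex.

    Only `.` needs escaping: it is literal in a filter pattern but a
    wildcard in a regex. `[0-9]`, `+` and `-` carry over unchanged.
    """
    return re.compile("".join(r"\." if ch == "." else ch for ch in pattern))


def triggers_npm_build(tag: str) -> bool:
    """Return True when `tag` fully matches at least one filter."""
    return any(filter_to_regex(p).fullmatch(tag) for p in TAG_FILTERS)
```

Under these assumptions a tag such as `v4.5.1` or `v4.5.2-alpha.3` would start the NPM build, while a beta tag such as `v4.5.2-beta.1` would not, because neither filter allows a `-beta` suffix.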

5

.github/workflows/run-tests.yaml

vendored

@@ -3,18 +3,19 @@ on:

|

||||

push:

|

||||

branches:

|

||||

- master

|

||||

- develop

|

||||

|

||||

jobs:

|

||||

test-runner:

|

||||

runs-on: ubuntu-latest

|

||||

container: node:10.18-jessie

|

||||

container: node:14.18

|

||||

|

||||

steps:

|

||||

- name: Context

|

||||

env:

|

||||

GITHUB_CONTEXT: ${{ toJson(github) }}

|

||||

run: echo "$GITHUB_CONTEXT"

|

||||

- uses: actions/checkout@v1

|

||||

- uses: actions/checkout@v2

|

||||

with:

|

||||

fetch-depth: 1

|

||||

- name: yarn install

|

||||

|

||||

136

CHANGELOG.md

@@ -1,6 +1,140 @@

|

||||

# ChangeLog

|

||||

### 4.5.1

|

||||

- FIXED: MongoId detection

|

||||

- FIXED: #203 disabled spellchecker

|

||||

- FIXED: Prevented display filters in form view twice

|

||||

- FIXED: Query designer fixes

|

||||

|

||||

### 4.2.4 - to be released

|

||||

### 4.5.0

|

||||

- ADDED: #220 functions, materialized views and stored procedures in code completion

|

||||

- ADDED: Query result in statusbar

|

||||

- ADDED: Highlight and execute current query

|

||||

- CHANGED: Code completion offers objects only from current query

|

||||

- CHANGED: Big optimizations of electron app - removed embedded web server, removed remote module, updated electron to version 13

|

||||

- CHANGED: Removed dependency to electron-store module

|

||||

- FIXED: #201 fixed database URL definition, when running from Docker container

|

||||

- FIXED: #192 Docker container stops in 1 second, ability to stop container with Ctrl+C

|

||||

- CHANGED: Web app - websocket replaced with SSE technology

|

||||

- CHANGED: Changed tab order, tabs are ordered by creation time

|

||||

- ADDED: Reorder tabs with drag & drop

|

||||

- CHANGED: Collapse left column in datagrid - removed from settings, remember last used state

|

||||

- ADDED: Ability to select multiple columns in column manager in datagrid + copy column names

|

||||

- ADDED: Show used filters in left datagrid column

|

||||

- FIXED: Fixed delete dependency cycle detection (delete didn't work for some tables)

|

||||

|

||||

### 4.4.4

|

||||

- FIXED: Database colors

|

||||

- CHANGED: Precise work with MongoDB ObjectId

|

||||

- FIXED: Run macro works on MongoDB collection data editor

|

||||

- ADDED: Type conversion macros

|

||||

- CHANGED: Improved UX of import into current database or current archive

|

||||

- ADDED: Possibility to create string MongoDB IDs when importing into MongoDB collections

|

||||

- CHANGED: Better crash recovery

|

||||

- FIXED: Context menu of data editor when using views - some commands didn't work for views

|

||||

- ADDED: Widget lists (on left side) now support add operation, where it makes sense

|

||||

- CHANGED: Improved UX of saved data sheets

|

||||

- ADDED: deploy - preloadedRows: implemented insertOnly columns

|

||||

- ADDED: Show change log after app upgrade

|

||||

|

||||

### 4.4.3

|

||||

- ADDED: Connection and database colors

|

||||

- ADDED: Ability to pin connection or table

|

||||

- ADDED: MongoDb: create, drop collection from menu

|

||||

- ADDED: Copy as MongoDB insert

|

||||

- ADDED: MongoDB support for multiple statements in script (dbgate-query-splitter)

|

||||

- ADDED: View JSON in tab

|

||||

- ADDED: Open DB model as JSON

|

||||

- ADDED: Open JSON array as data sheet

|

||||

- ADDED: Open JSON from data grid

|

||||

- FIXED: Mongo update command when using string IDs resembling Mongo IDs

|

||||

- CHANGED: Improved add JSON document, change JSON document commands

|

||||

- ADDED: Possibility to add column to JSON grid view

|

||||

- FIXED: Hiding columns #1

|

||||

- REMOVED: Copy JSON document menu command (please use Copy advanced instead)

|

||||

- CHANGED: Save widget visibility and size

|

||||

|

||||

### 4.4.2

|

||||

- ADDED: Open SQL script from SQL confirm

|

||||

- CHANGED: Better looking statusbar

|

||||

- ADDED: Create table from database popup menu

|

||||

- FIXED: Some fixes for DB compare+deploy (eg. #196)

|

||||

- ADDED: Archives + DB models from external directories

|

||||

- ADDED: DB deploy supports preloaded data

|

||||

- ADDED: Support for Command key on Mac (#199)

|

||||

|

||||

### 4.4.1

|

||||

- FIXED: #188 Fixed problem with datetime values in PostgreSQL and mysql

|

||||

- ADDED: #194 Close tabs by DB

|

||||

- FIXED: Improved form view width calculations

|

||||

- CHANGED: Form view - highlight matched columns instead of filtering

|

||||

- ADDED: Lookup distinct values

|

||||

- ADDED: Copy advanced command, Copy as CSV, JSON, YAML, SQL

|

||||

- CHANGED: Hide column manager by default

|

||||

- ADDED: Change database status command

|

||||

- CHANGED: Table structure and view structure tabs have different icons

|

||||

- ADDED: #186 - zoom setting

|

||||

- ADDED: Row count information moved into status bar, when only one grid on tab is used (typical case)

|

||||

|

||||

### 4.4.0

|

||||

- ADDED: Database structure compare, export report to HTML

|

||||

- ADDED: Experimental: Deploy DB structure changes between databases

|

||||

- ADDED: Lookup dialog, available in table view on columns with foreign key

|

||||

- ADDED: Customize foreign key lookups

|

||||

- ADDED: Chart improvements, export charts as HTML page

|

||||

- ADDED: Experimental: work with DB model, deploy model, compare model with real DB

|

||||

- ADDED: #193 new SQLite db command

|

||||

- CHANGED: #190 code completion improvements

|

||||

- ADDED: #189 Copy JSON document - context menu command in data grid for MongoDB

|

||||

- ADDED: #191 Connection to PostgreSQL can be defined also with connection string

|

||||

- ADDED: #187 dbgate-query-splitter: Transform stream support

|

||||

- CHANGED: Upgraded to node 12 in docker app

|

||||

- FIXED: Upgraded to node 12 in docker app

|

||||

- FIXED: Fixed import into SQLite and PostgreSQL databases, added integration test for this

|

||||

|

||||

### 4.3.4

|

||||

- FIXED: Delete row with binary ID in MySQL (#182)

|

||||

- ADDED: Using 'ODBC Driver 17 for SQL Server' or 'SQL Server Native Client 11.0', when connecting to MS SQL using windows auth #183

|

||||

|

||||

### 4.3.3

|

||||

- ADDED: Generate SQL from data (#176 - Copy row as INSERT/UPDATE statement)

|

||||

- ADDED: Datagrid keyboard column operations (Ctrl+F - find column, Ctrl+H - hide column) #180

|

||||

- FIXED: Make window remember that it was maximized

|

||||

- FIXED: Fixed lost focus after copy to clipboard and after inserting SQL join

|

||||

|

||||

### 4.3.2

|

||||

- FIXED: Sorted database list in PostgreSQL (#178)

|

||||

- FIXED: Loading structure of PostgreSQL database, when it contains indexes on expressions (#175)

|

||||

- ADDED: Hotkey Shift+Alt+F for formatting SQL code

|

||||

|

||||

### 4.3.1

|

||||

- FIXED: #173 Using key phrase for SSH key file connection

|

||||

- ADDED: #172 Ability to quick search within database names

|

||||

- ADDED: Database search added to command palette (Ctrl+P)

|

||||

- FIXED: #171 fixed PostgreSQL analyser for older versions than 9.3 (matviews don't exist)

|

||||

- ADDED: DELETE cascade option - ability to delete all referenced rows, when deleting rows

|

||||

|

||||

### 4.3.0

|

||||

- ADDED: Table structure editor

|

||||

- ADDED: Index support

|

||||

- ADDED: Unique constraint support

|

||||

- ADDED: Context menu for drop/rename table/columns and for drop view/procedure/function

|

||||

- ADDED: Added support for Windows arm64 platform

|

||||

- FIXED: Search by _id in MongoDB

|

||||

|

||||

### 4.2.6

|

||||

- FIXED: Fixed MongoDB import

|

||||

- ADDED: Configurable thousands separator #136

|

||||

- ADDED: Using case insensitive text search in postgres

|

||||

|

||||

### 4.2.5

|

||||

- FIXED: Fixed crash when using large model on some installations

|

||||

- FIXED: PostgreSQL CREATE function

|

||||

- FIXED: Analysing of MySQL when modifyDate is not known

|

||||

|

||||

### 4.2.4

|

||||

- ADDED: Query history

|

||||

- ADDED: One-click exports in desktop app

|

||||

- ADDED: JSON array export

|

||||

- FIXED: Procedures in PostgreSQL #122

|

||||

- ADDED: Support of materialized views for PostgreSQL #123

|

||||

- ADDED: Integration tests

|

||||

|

||||

80

README.md

@@ -4,15 +4,21 @@

|

||||

[](https://snapcraft.io/dbgate)

|

||||

[](https://github.com/prettier/prettier)

|

||||

|

||||

# DbGate - database administration tool

|

||||

<img src="https://raw.githubusercontent.com/dbgate/dbgate/master/app/icon.png" width="64" align="right"/>

|

||||

|

||||

DbGate modern, fast and easy to use database manager

|

||||

# DbGate - (no)SQL database client

|

||||

|

||||

DbGate is a cross-platform database manager.

|

||||

It's designed to be simple to use and effective when working with multiple databases simultaneously.

|

||||

But there are also many advanced features like schema compare, visual query designer, chart visualisation or batch export and import.

|

||||

|

||||

DbGate is licensed under MIT license and is completely free.

|

||||

|

||||

* Try it online - [demo.dbgate.org](https://demo.dbgate.org) - online demo application

|

||||

* Download application for Windows, Linux or Mac from [dbgate.org](https://dbgate.org/download/)

|

||||

* **Download** application for Windows, Linux or Mac from [dbgate.org](https://dbgate.org/download/)

|

||||

* Run web version as [NPM package](https://www.npmjs.com/package/dbgate) or as [docker image](https://hub.docker.com/r/dbgate/dbgate)

|

||||

|

||||

Supported databases:

|

||||

## Supported databases

|

||||

* MySQL

|

||||

* PostgreSQL

|

||||

* SQL Server

|

||||

@@ -22,12 +28,30 @@ Supported databases:

|

||||

* CockroachDB

|

||||

* MariaDB

|

||||

|

||||

|

||||

<!-- Learn more about DbGate features at the [DbGate website](https://dbgate.org/), or try our online [demo application](https://demo.dbgate.org) -->

|

||||

|

||||

|

||||

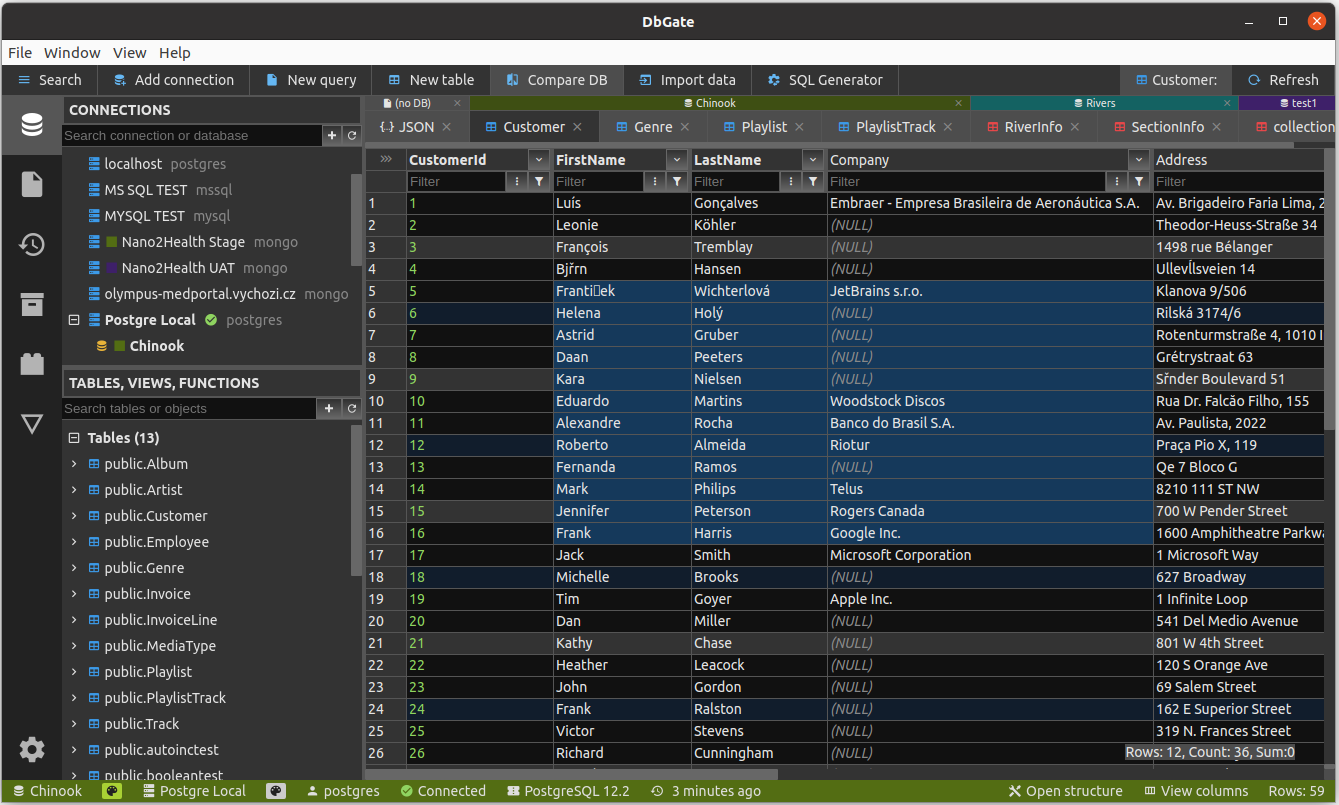

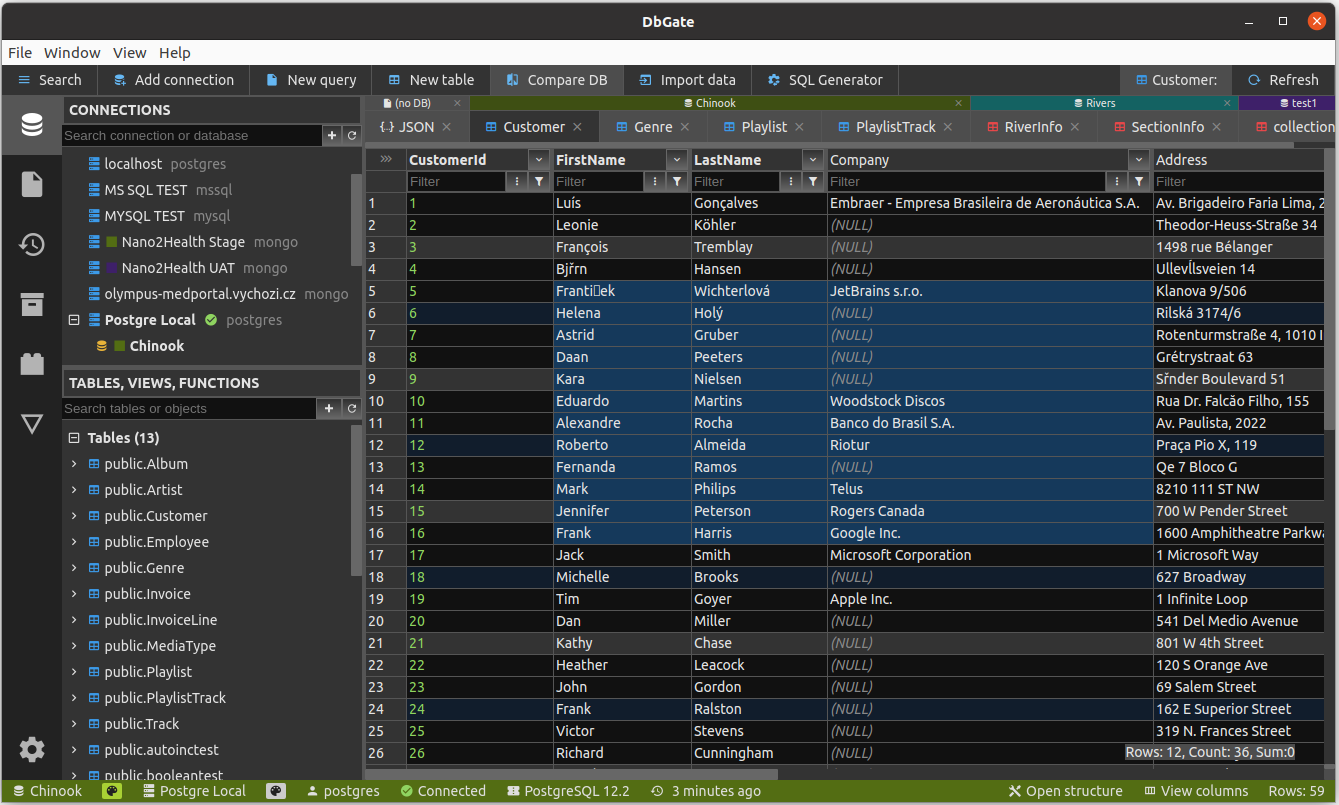

<a href="https://raw.githubusercontent.com/dbgate/dbgate/master/img/screenshot1.png">

|

||||

<img src="https://raw.githubusercontent.com/dbgate/dbgate/master/img/screenshot1.png" width="400"/>

|

||||

</a>

|

||||

<a href="https://raw.githubusercontent.com/dbgate/dbgate/master/img/screenshot2.png">

|

||||

<img src="https://raw.githubusercontent.com/dbgate/dbgate/master/img/screenshot2.png" width="400"/>

|

||||

</a>

|

||||

<a href="https://raw.githubusercontent.com/dbgate/dbgate/master/img/screenshot4.png">

|

||||

<img src="https://raw.githubusercontent.com/dbgate/dbgate/master/img/screenshot4.png" width="400"/>

|

||||

</a>

|

||||

<a href="https://raw.githubusercontent.com/dbgate/dbgate/master/img/screenshot3.png">

|

||||

<img src="https://raw.githubusercontent.com/dbgate/dbgate/master/img/screenshot3.png" width="400"/>

|

||||

</a>

|

||||

|

||||

<!--  -->

|

||||

|

||||

## Features

|

||||

* Table data editing, with SQL change script preview

|

||||

* Edit table schema, indexes, primary and foreign keys

|

||||

* Compare and synchronize database structure

|

||||

* Light and dark theme

|

||||

* Master/detail views

|

||||

* Master/detail views, foreign key lookups

|

||||

* Query designer

|

||||

* Form view for comfortable work with tables with many columns

|

||||

* JSON view on MongoDB collections

|

||||

@@ -42,20 +66,25 @@ Supported databases:

|

||||

* Import, export from/to CSV, Excel, JSON

|

||||

* Free table editor - quick table data editing (cleanup data after import/before export, prototype tables etc.)

|

||||

* Archives - backup your data in JSON files on local filesystem (or on DbGate server, when using web application)

|

||||

* Charts

|

||||

* Charts, export chart to HTML page

|

||||

* For detailed info, how to run DbGate in docker container, visit [docker hub](https://hub.docker.com/r/dbgate/dbgate)

|

||||

* Extensible plugin architecture

|

||||

|

||||

## How to contribute

|

||||

Any contributions are welcome. If you want to contribute without coding, consider following:

|

||||

* Create issue, if you find problem in app, or you have idea to new feature. If issue already exists, you could leave comment on it, to prioritise most wanted issues.

|

||||

|

||||

* Tell your friends about DbGate or share on social networks - when more people will use DbGate, it will grow to be better

|

||||

* Write review on [Slant.co](https://www.slant.co/improve/options/41086/~dbgate-review) or [G2](https://www.g2.com/products/dbgate/reviews)

|

||||

* Create issue, if you find problem in app, or you have idea to new feature. If issue already exists, you could leave comment on it, to prioritise most wanted issues.

|

||||

* Become a backer on [Open collective](https://opencollective.com/dbgate)

|

||||

|

||||

Thank you!

|

||||

|

||||

## Why is DbGate different

|

||||

There are many database managers now, so why DbGate?

|

||||

* Works everywhere - Windows, Linux, Mac, Web browser (+mobile web is planned), without compromises in features

|

||||

* Based on standalone NPM packages, scripts can be run without DbGate (example - [CSV export](https://www.npmjs.com/package/dbgate-plugin-csv) )

|

||||

* Many data browsing functions based using foreign keys - master/detail, expand columns, expandable form view (on screenshot above)

|

||||

* Many data browsing functions based using foreign keys - master/detail, expand columns, expandable form view

|

||||

|

||||

## Design goals

|

||||

* Application simplicity - DbGate takes the best and only the best from old [DbGate](http://www.jenasoft.com/dbgate), [DatAdmin](http://www.jenasoft.com/datadmin) and [DbMouse](http://www.jenasoft.com/dbmouse) .

|

||||

@@ -64,9 +93,9 @@ There are many database managers now, so why DbGate?

|

||||

* Backend - NodeJs, ExpressJs, socket.io, database connection drivers

|

||||

* JavaScript + TypeScript

|

||||

* App - electron

|

||||

* Platform independed - will run as web application in single docker container on server, or as application using Electron platform on Linux, Windows and Mac

|

||||

* Platform independent - runs as web application in single docker container on server, or as application using Electron platform on Linux, Windows and Mac

|

||||

|

||||

## Plugins

|

||||

<!-- ## Plugins

|

||||

Plugins are standard NPM packages published on [npmjs.com](https://www.npmjs.com).

|

||||

See all [existing DbGate plugins](https://www.npmjs.com/search?q=keywords:dbgateplugin).

|

||||

Visit [dbgate generator homepage](https://github.com/dbgate/generator-dbgate) to see, how to create your own plugin.

|

||||

@@ -75,31 +104,46 @@ Currently following extensions can be implemented using plugins:

|

||||

- File format parsers/writers

|

||||

- Database engine connectors

|

||||

|

||||

Basic set of plugins is part of DbGate git repository and is installed with app. Additional plugins must be downloaded from NPM (this task is handled by DbGate)

|

||||

Basic set of plugins is part of DbGate git repository and is installed with app. Additional plugins must be downloaded from NPM (this task is handled by DbGate) -->

|

||||

|

||||

## How to run development environment

|

||||

|

||||

Simple variant - runs WEB application:

|

||||

```sh

|

||||

yarn

|

||||

yarn start

|

||||

```

|

||||

|

||||

If you want to make modifications in libraries or plugins, run library compiler in watch mode in the second terminal:

|

||||

If you want more control, run WEB application:

|

||||

```sh

|

||||

yarn lib

|

||||

yarn # install NPM packages

|

||||

```

|

||||

|

||||

And then run the following 3 commands concurrently in 3 terminals:

|

||||

```

|

||||

yarn start:api # run API on port 3000

|

||||

yarn start:web # run web on port 5000

|

||||

yarn lib # watch typescript libraries and plugins modifications

|

||||

```

|

||||

This runs API on port 3000 and web application on port 5000

|

||||

Open http://localhost:5000 in your browser

|

||||

|

||||

You could run electron app (requires running localhost:5000):

|

||||

If you want to run electron app:

|

||||

```sh

|

||||

yarn # install NPM packages

|

||||

cd app

|

||||

yarn

|

||||

yarn start

|

||||

yarn # install NPM packages for electron

|

||||

```

|

||||

|

||||

And then run the following 3 commands concurrently in 3 terminals:

|

||||

```

|

||||

yarn start:web # run web on port 5000 (only static JS and HTML files)

|

||||

yarn lib # watch typescript libraries and plugins modifications

|

||||

yarn start:app # run electron app

|

||||

```

|

||||

|

||||

## How to run built electron app locally

|

||||

This mode is very similar to production run of electron app. Electron app forks process with API on dynamically allocated port, works with compiled javascript files and uses compiled version of plugins (doesn't use localhost:5000)

|

||||

This mode is very similar to production run of electron app. Electron doesn't use localhost:5000.

|

||||

|

||||

```sh

|

||||

cd app

|

||||

|

||||

BIN

app/icons/128x128.png

Normal file

|

After Width: | Height: | Size: 12 KiB |

BIN

app/icons/16x16.png

Normal file

|

After Width: | Height: | Size: 1.4 KiB |

BIN

app/icons/256x256.png

Normal file

|

After Width: | Height: | Size: 24 KiB |

BIN

app/icons/32x32.png

Normal file

|

After Width: | Height: | Size: 1.9 KiB |

BIN

app/icons/48x48.png

Normal file

|

After Width: | Height: | Size: 3.0 KiB |

BIN

app/icons/512x512.png

Normal file

|

After Width: | Height: | Size: 70 KiB |

BIN

app/icons/64x64.png

Normal file

|

After Width: | Height: | Size: 4.6 KiB |

@@ -5,10 +5,8 @@

|

||||

"author": "Jan Prochazka <jenasoft.database@gmail.com>",

|

||||

"description": "Opensource database administration tool",

|

||||

"dependencies": {

|

||||

"better-sqlite3-with-prebuilds": "^7.1.8",

|

||||

"electron-log": "^4.3.1",

|

||||

"electron-store": "^5.1.1",

|

||||

"electron-updater": "^4.3.5",

|

||||

"electron-log": "^4.4.1",

|

||||

"electron-updater": "^4.6.1",

|

||||

"patch-package": "^6.4.7"

|

||||

},

|

||||

"repository": {

|

||||

@@ -45,7 +43,7 @@

|

||||

]

|

||||

}

|

||||

],

|

||||

"icon": "icon.png",

|

||||

"icon": "icons/",

|

||||

"category": "Development",

|

||||

"synopsis": "Database manager for SQL Server, MySQL, PostgreSQL, MongoDB and SQLite",

|

||||

"publish": [

|

||||

@@ -68,7 +66,13 @@

|

||||

"win": {

|

||||

"target": [

|

||||

"nsis",

|

||||

"zip"

|

||||

{

|

||||

"target": "zip",

|

||||

"arch": [

|

||||

"x64",

|

||||

"arm64"

|

||||

]

|

||||

}

|

||||

],

|

||||

"icon": "icon.ico",

|

||||

"publish": [

|

||||

@@ -84,23 +88,25 @@

|

||||

},

|

||||

"homepage": "./",

|

||||

"scripts": {

|

||||

"start": "cross-env ELECTRON_START_URL=http://localhost:5000 electron .",

|

||||

"start": "cross-env ELECTRON_START_URL=http://localhost:5000 DEVMODE=1 electron .",

|

||||

"start:local": "cross-env electron .",

|

||||

"dist": "electron-builder",

|

||||

"build": "cd ../packages/api && yarn build && cd ../web && yarn build && cd ../../app && yarn dist",

|

||||

"build:mac": "cd ../packages/api && yarn build && cd ../web && yarn build && cd ../../app && node setMacPlatform x64 && yarn dist && node setMacPlatform arm64 && yarn dist",

|

||||

"build:local": "cd ../packages/api && yarn build && cd ../web && yarn build && cd ../../app && yarn predist",

|

||||

"postinstall": "electron-builder install-app-deps && patch-package",

|

||||

"postinstall": "yarn rebuild && patch-package",

|

||||

"rebuild": "electron-builder install-app-deps",

|

||||

"predist": "copyfiles ../packages/api/dist/* packages && copyfiles \"../packages/web/public/*\" packages && copyfiles \"../packages/web/public/**/*\" packages && copyfiles --up 3 \"../plugins/dist/**/*\" packages/plugins"

|

||||

},

|

||||

"main": "src/electron.js",

|

||||

"devDependencies": {

|

||||

"copyfiles": "^2.2.0",

|

||||

"cross-env": "^6.0.3",

|

||||

"electron": "11.2.3",

|

||||

"electron-builder": "22.10.5"

|

||||

"electron": "13.6.3",

|

||||

"electron-builder": "22.14.5"

|

||||

},

|

||||

"optionalDependencies": {

|

||||

"msnodesqlv8": "^2.0.10"

|

||||

"better-sqlite3": "7.4.5",

|

||||

"msnodesqlv8": "^2.4.4"

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

@@ -1,9 +1,8 @@

|

||||

const electron = require('electron');

|

||||

const os = require('os');

|

||||

const fs = require('fs');

|

||||

const { Menu, ipcMain } = require('electron');

|

||||

const { fork } = require('child_process');

|

||||

const { autoUpdater } = require('electron-updater');

|

||||

const Store = require('electron-store');

|

||||

const log = require('electron-log');

|

||||

|

||||

// Module to control application life.

|

||||

@@ -14,7 +13,17 @@ const BrowserWindow = electron.BrowserWindow;

|

||||

const path = require('path');

|

||||

const url = require('url');

|

||||

|

||||

const store = new Store();

|

||||

// require('@electron/remote/main').initialize();

|

||||

|

||||

const configRootPath = path.join(app.getPath('userData'), 'config-root.json');

|

||||

let initialConfig = {};

|

||||

|

||||

try {

|

||||

initialConfig = JSON.parse(fs.readFileSync(configRootPath, { encoding: 'utf-8' }));

|

||||

} catch (err) {

|

||||

console.log('Error loading config-root:', err.message);

|

||||

initialConfig = {};

|

||||

}

|

||||

|

||||

// Keep a global reference of the window object, if you don't, the window will

|

||||

// be closed automatically when the JavaScript object is garbage collected.

|

||||

@@ -36,7 +45,7 @@ function commandItem(id) {

|

||||

accelerator: command ? command.keyText : undefined,

|

||||

enabled: command ? command.enabled : false,

|

||||

click() {

|

||||

mainWindow.webContents.executeJavaScript(`dbgate_runCommand('${id}')`);

|

||||

mainWindow.webContents.send('run-command', id);

|

||||

},

|

||||

};

|

||||

}

|

||||

@@ -47,11 +56,19 @@ function buildMenu() {

|

||||

label: 'File',

|

||||

submenu: [

|

||||

commandItem('new.connection'),

|

||||

commandItem('new.sqliteDatabase'),

|

||||

commandItem('new.modelCompare'),

|

||||

commandItem('new.freetable'),

|

||||

{ type: 'separator' },

|

||||

commandItem('file.open'),

|

||||

commandItem('file.openArchive'),

|

||||

{ type: 'separator' },

|

||||

commandItem('group.save'),

|

||||

commandItem('group.saveAs'),

|

||||

commandItem('database.search'),

|

||||

{ type: 'separator' },

|

||||

{ role: 'close' },

|

||||

commandItem('tabs.closeTab'),

|

||||

commandItem('file.exit'),

|

||||

],

|

||||

},

|

||||

{

|

||||

@@ -91,27 +108,28 @@ function buildMenu() {

|

||||

{

|

||||

label: 'dbgate.org',

|

||||

click() {

|

||||

require('electron').shell.openExternal('https://dbgate.org');

|

||||

electron.shell.openExternal('https://dbgate.org');

|

||||

},

|

||||

},

|

||||

{

|

||||

label: 'DbGate on GitHub',

|

||||

click() {

|

||||

require('electron').shell.openExternal('https://github.com/dbgate/dbgate');

|

||||

electron.shell.openExternal('https://github.com/dbgate/dbgate');

|

||||

},

|

||||

},

|

||||

{

|

||||

label: 'DbGate on docker hub',

|

||||

click() {

|

||||

require('electron').shell.openExternal('https://hub.docker.com/r/dbgate/dbgate');

|

||||

electron.shell.openExternal('https://hub.docker.com/r/dbgate/dbgate');

|

||||

},

|

||||

},

|

||||

{

|

||||

label: 'Report problem or feature request',

|

||||

click() {

|

||||

require('electron').shell.openExternal('https://github.com/dbgate/dbgate/issues/new');

|

||||

electron.shell.openExternal('https://github.com/dbgate/dbgate/issues/new');

|

||||

},

|

||||

},

|

||||

commandItem('tabs.changelog'),

|

||||

commandItem('about.show'),

|

||||

],

|

||||

},

|

||||

@@ -137,10 +155,27 @@ ipcMain.on('update-commands', async (event, arg) => {

|

||||

menu.enabled = command.enabled;

|

||||

}

|

||||

});

|

||||

ipcMain.on('close-window', async (event, arg) => {

|

||||

mainWindow.close();

|

||||

});

|

||||

|

||||

ipcMain.handle('showOpenDialog', async (event, options) => {

|

||||

const res = electron.dialog.showOpenDialogSync(mainWindow, options);

|

||||

return res;

|

||||

});

|

||||

ipcMain.handle('showSaveDialog', async (event, options) => {

|

||||

const res = electron.dialog.showSaveDialogSync(mainWindow, options);

|

||||

return res;

|

||||

});

|

||||

ipcMain.handle('showItemInFolder', async (event, path) => {

|

||||

electron.shell.showItemInFolder(path);

|

||||

});

|

||||

ipcMain.handle('openExternal', async (event, url) => {

|

||||

electron.shell.openExternal(url);

|

||||

});

|

||||

|

||||

function createWindow() {

|

||||

const bounds = store.get('winBounds');

|

||||

|

||||

const bounds = initialConfig['winBounds'];

|

||||

mainWindow = new BrowserWindow({

|

||||

width: 1200,

|

||||

height: 800,

|

||||

@@ -149,10 +184,15 @@ function createWindow() {

|

||||

icon: os.platform() == 'win32' ? 'icon.ico' : path.resolve(__dirname, '../icon.png'),

|

||||

webPreferences: {

|

||||

nodeIntegration: true,

|

||||

enableRemoteModule: true,

|

||||

contextIsolation: false,

|

||||

spellcheck: false,

|

||||

},

|

||||

});

|

||||

|

||||

if (initialConfig['winIsMaximized']) {

|

||||

mainWindow.maximize();

|

||||

}

|

||||

|

||||

mainMenu = buildMenu();

|

||||

mainWindow.setMenu(mainMenu);

|

||||

|

||||

@@ -164,11 +204,19 @@ function createWindow() {

|

||||

protocol: 'file:',

|

||||

slashes: true,

|

||||

});

|

||||

mainWindow.webContents.on('did-finish-load', function () {

|

||||

// hideSplash();

|

||||

});

|

||||

mainWindow.on('close', () => {

|

||||

store.set('winBounds', mainWindow.getBounds());

|

||||

try {

|

||||

fs.writeFileSync(

|

||||

configRootPath,

|

||||

JSON.stringify({

|

||||

winBounds: mainWindow.getBounds(),

|

||||

winIsMaximized: mainWindow.isMaximized(),

|

||||

}),

|

||||

'utf-8'

|

||||

);

|

||||

} catch (err) {

|

||||

console.log('Error saving config-root:', err.message);

|

||||

}

|

||||

});

|

||||

mainWindow.loadURL(startUrl);

|

||||

if (os.platform() == 'linux') {

|

||||

@@ -176,31 +224,27 @@ function createWindow() {

|

||||

}

|

||||

}

|

||||

|

||||

if (process.env.ELECTRON_START_URL) {

|

||||

loadMainWindow();

|

||||

} else {

|

||||

const apiProcess = fork(path.join(__dirname, '../packages/api/dist/bundle.js'), [

|

||||

'--dynport',

|

||||

'--is-electron-bundle',

|

||||

'--native-modules',

|

||||

path.join(__dirname, 'nativeModules'),

|

||||

// '../../../src/nativeModules'

|

||||

]);

|

||||

apiProcess.on('message', msg => {

|

||||

if (msg.msgtype == 'listening') {

|

||||

const { port, authorization } = msg;

|

||||

global['port'] = port;

|

||||

global['authorization'] = authorization;

|

||||

loadMainWindow();

|

||||

}

|

||||

});

|

||||

}

|

||||

const apiPackage = path.join(

|

||||

__dirname,

|

||||

process.env.DEVMODE ? '../../packages/api/src/index' : '../packages/api/dist/bundle.js'

|

||||

);

|

||||

|

||||

// and load the index.html of the app.

|

||||

// mainWindow.loadURL('http://localhost:3000');

|

||||

global.API_PACKAGE = apiPackage;

|

||||

global.NATIVE_MODULES = path.join(__dirname, 'nativeModules');

|

||||

|

||||

// Open the DevTools.

|

||||

// mainWindow.webContents.openDevTools();

|

||||

// console.log('global.API_PACKAGE', global.API_PACKAGE);

|

||||

const api = require(apiPackage);

|

||||

// console.log(

|

||||

// 'REQUIRED',

|

||||

// path.resolve(

|

||||

// path.join(__dirname, process.env.DEVMODE ? '../../packages/api/src/index' : '../packages/api/dist/bundle.js')

|

||||

// )

|

||||

// );

|

||||

const main = api.getMainModule();

|

||||

main.initializeElectronSender(mainWindow.webContents);

|

||||

main.useAllControllers(null, electron);

|

||||

|

||||

loadMainWindow();

|

||||

|

||||

// Emitted when the window is closed.

|

||||

mainWindow.on('closed', function () {

|

||||

@@ -212,7 +256,9 @@ function createWindow() {

|

||||

}

|

||||

|

||||

function onAppReady() {

|

||||

autoUpdater.checkForUpdatesAndNotify();

|

||||

if (!process.env.DEVMODE) {

|

||||

autoUpdater.checkForUpdatesAndNotify();

|

||||

}

|

||||

createWindow();

|

||||

}

|

||||

|

||||

|

||||
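The `src/electron.js` changes above replace `electron-store` with a plain JSON file (`config-root.json`) that is read once at startup and rewritten when the window closes. A minimal sketch of that load/save pattern follows, assuming plain Node.js without Electron. The helper names `loadConfig` and `saveConfig` and the temporary directory are hypothetical and do not appear in the diff; the real code resolves the path with `app.getPath('userData')`.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

// Hypothetical helper: read a JSON config file, falling back to {} on any
// error (missing file, invalid JSON), as the try/catch in the diff does.
function loadConfig(configPath) {
  try {
    return JSON.parse(fs.readFileSync(configPath, { encoding: 'utf-8' }));
  } catch (err) {
    console.log('Error loading config-root:', err.message);
    return {};
  }
}

// Hypothetical helper: write the config, logging instead of throwing so a
// failed write cannot block the window from closing.
function saveConfig(configPath, config) {
  try {
    fs.writeFileSync(configPath, JSON.stringify(config), 'utf-8');
    return true;
  } catch (err) {
    console.log('Error saving config-root:', err.message);
    return false;
  }
}

// Demo in a temporary directory (stand-in for the Electron userData folder).
const demoDir = fs.mkdtempSync(path.join(os.tmpdir(), 'config-root-'));
const demoPath = path.join(demoDir, 'config-root.json');

const first = loadConfig(demoPath); // file does not exist yet, so {}
saveConfig(demoPath, {
  winBounds: { x: 0, y: 0, width: 1200, height: 800 },
  winIsMaximized: false,
});
const second = loadConfig(demoPath); // now returns the saved window state
```

Swallowing both read and write errors means a corrupt or unwritable file degrades to default window bounds rather than preventing startup or shutdown.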

1643

app/yarn.lock

@@ -1,4 +1,4 @@

|

||||

FROM node:12-alpine

|

||||

FROM node:14-alpine

|

||||

|

||||

WORKDIR /home/dbgate-docker

|

||||

|

||||

|

||||

@@ -5,7 +5,7 @@ let fillContent = '';

|

||||

if (process.platform == 'win32') {

|

||||

fillContent += `content.msnodesqlv8 = () => require('msnodesqlv8');`;

|

||||

}

|

||||

fillContent += `content['better-sqlite3-with-prebuilds'] = () => require('better-sqlite3-with-prebuilds');`;

|

||||

fillContent += `content['better-sqlite3'] = () => require('better-sqlite3');`;

|

||||

|

||||

const getContent = (empty) => `

|

||||

// this file is generated automatically by script fillNativeModules.js, do not edit it manually

|

||||

|

||||
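The hunk above builds source text that registers each native module behind a function, so the module is only loaded when that function is called. A rough sketch of the same idea is shown below. The `buildFillContent` helper and the use of `new Function` to evaluate the generated text are assumptions made for this illustration; the real script writes the text into a generated file instead.

```javascript
// Hypothetical generator: turns module names into lazy-loader assignments,
// mirroring the `content[...] = () => require(...)` lines in the diff.
function buildFillContent(moduleNames) {
  return moduleNames
    .map(name => `content[${JSON.stringify(name)}] = () => require(${JSON.stringify(name)});`)
    .join('\n');
}

const moduleNames = ['better-sqlite3'];
if (process.platform === 'win32') {
  // msnodesqlv8 is only registered on Windows, as in the original script.
  moduleNames.unshift('msnodesqlv8');
}

const fillContent = buildFillContent(moduleNames);

// Evaluate the generated text to obtain the loader map. No native module is
// required here; that happens only when a loader function is invoked.
const content = {};
new Function('content', 'require', fillContent)(content, require);

console.log(Object.keys(content));
```

Because each entry is a function, a missing optional module does not cause a failure until some code actually asks for it.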

BIN

img/screenshot1.png

Normal file

|

After Width: | Height: | Size: 279 KiB |

BIN

img/screenshot2.png

Normal file

|

After Width: | Height: | Size: 228 KiB |

BIN

img/screenshot3.png

Normal file

|

After Width: | Height: | Size: 148 KiB |

BIN

img/screenshot4.png

Normal file

|

After Width: | Height: | Size: 204 KiB |

68

integration-tests/__tests__/alter-database.spec.js

Normal file

@@ -0,0 +1,68 @@

|

||||

const stableStringify = require('json-stable-stringify');

|

||||

const _ = require('lodash');

|

||||

const fp = require('lodash/fp');

|

||||

const uuidv1 = require('uuid/v1');

|

||||

const { testWrapper } = require('../tools');

|

||||

const engines = require('../engines');

|

||||

const { getAlterDatabaseScript, extendDatabaseInfo, generateDbPairingId } = require('dbgate-tools');

|

||||

|

||||

function flatSource() {

|

||||

return _.flatten(

|

||||

engines.map(engine => (engine.objects || []).map(object => [engine.label, object.type, object, engine]))

|

||||

);

|

||||

}

|

||||

|

||||

async function testDatabaseDiff(conn, driver, mangle, createObject = null) {

|

||||

await driver.query(conn, `create table t1 (id int not null primary key)`);

|

||||

|

||||

await driver.query(

|

||||

conn,

|

||||

`create table t2 (

|

||||

id int not null primary key,

|

||||

t1_id int null references t1(id)

|

||||

)`

|

||||

);

|

||||

|

||||

if (createObject) await driver.query(conn, createObject);

|

||||

|

||||

const structure1 = generateDbPairingId(extendDatabaseInfo(await driver.analyseFull(conn)));

|

||||

let structure2 = _.cloneDeep(structure1);

|

||||

mangle(structure2);

|

||||

structure2 = extendDatabaseInfo(structure2);

|

||||

|

||||

const { sql } = getAlterDatabaseScript(structure1, structure2, {}, structure1, structure2, driver);

|

||||

console.log('RUNNING ALTER SQL', driver.engine, ':', sql);

|

||||

|

||||

await driver.script(conn, sql);

|

||||

|

||||

const structure2Real = extendDatabaseInfo(await driver.analyseFull(conn));

|

||||

|

||||

expect(structure2Real.tables.length).toEqual(structure2.tables.length);

|

||||

return structure2Real;

|

||||

}

|

||||

|

||||

describe('Alter database', () => {

|

||||

test.each(engines.map(engine => [engine.label, engine]))(

|

||||

'Drop referenced table - %s',

|

||||

testWrapper(async (conn, driver, engine) => {

|

||||

await testDatabaseDiff(conn, driver, db => {

|

||||

_.remove(db.tables, x => x.pureName == 't1');

|

||||

});

|

||||

})

|

||||

);

|

||||

|

||||

test.each(flatSource())(

|

||||

'Drop object - %s - %s',

|

||||

testWrapper(async (conn, driver, type, object, engine) => {

|

||||

const db = await testDatabaseDiff(

|

||||

conn,

|

||||

driver,

|

||||

db => {

|

||||

_.remove(db[type], x => x.pureName == 'obj1');

|

||||

},

|

||||

object.create1

|

||||

);

|

||||

expect(db[type].length).toEqual(0);

|

||||

})

|

||||

);

|

||||

});

|

||||
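The spec above follows a clone, mangle, diff pattern: analyse the live structure, deep-clone it, remove an object from the clone, then generate and run an alter script that brings the database to the mangled state. The toy sketch below illustrates that pattern on plain objects. It does not use `dbgate-tools`: `toyDropScript`, the structure shape, and the emitted SQL are all hypothetical and far simpler than what `getAlterDatabaseScript` handles.

```javascript
// Toy model of a database structure; the field names are illustrative only.
const before = {
  tables: [
    { pureName: 't1', foreignKeys: [] },
    { pureName: 't2', foreignKeys: [{ constraintName: 'fk_t2_t1', refTableName: 't1' }] },
  ],
};

// Deep clone so that mangling the copy leaves the original untouched.
function clone(value) {
  return JSON.parse(JSON.stringify(value));
}

// Hypothetical diff: drop tables missing from newDb, but first drop foreign
// keys in surviving tables that reference them (the "drop referenced table" case).
function toyDropScript(oldDb, newDb) {
  const kept = new Set(newDb.tables.map(t => t.pureName));
  const dropped = oldDb.tables.filter(t => !kept.has(t.pureName)).map(t => t.pureName);
  const statements = [];
  for (const table of oldDb.tables) {
    if (!kept.has(table.pureName)) continue; // its constraints go away with the table
    for (const fk of table.foreignKeys) {
      if (dropped.includes(fk.refTableName)) {
        statements.push(`ALTER TABLE ${table.pureName} DROP CONSTRAINT ${fk.constraintName}`);
      }
    }
  }
  for (const name of dropped) {
    statements.push(`DROP TABLE ${name}`);
  }
  return statements;
}

// The "mangle" step: remove t1 from the cloned structure.
const after = clone(before);
after.tables = after.tables.filter(t => t.pureName !== 't1');

const script = toyDropScript(before, after);
console.log(script.join(';\n'));
```

The ordering matters: most engines refuse to drop a table that is still referenced by a foreign key, which is why the constraint is removed before the table.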

124

integration-tests/__tests__/alter-table.spec.js

Normal file

@@ -0,0 +1,124 @@

|

||||

const stableStringify = require('json-stable-stringify');

|

||||

const _ = require('lodash');

|

||||

const fp = require('lodash/fp');

|

||||

const uuidv1 = require('uuid/v1');

|

||||

const { testWrapper } = require('../tools');

|

||||

const engines = require('../engines');

|

||||

const { getAlterTableScript, extendDatabaseInfo, generateDbPairingId } = require('dbgate-tools');

|

||||

|

||||

function pickImportantTableInfo(table) {

|

||||

return {

|

||||